Grammatical Person Representation in Large Language Models

Dec 1, 2025

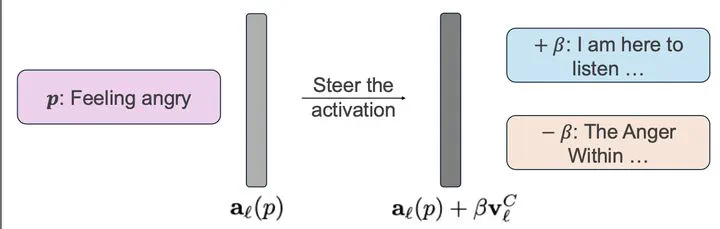

Many interpretability analyses of language models struggle to separate user-oriented from model-oriented perspectives, because natural language switches freely between first person (“I”) and second person (“you”). In this work, we study how LLMs internally represent grammatical person, the distinction between “I” and “you”. We investigate whether this distinction is encoded linearly in the models' latent representations, how intervening along the corresponding directions changes output behavior, and how the representation relates to the personas the models adopt during generation.
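
To make "encoded linearly" and "intervening along a direction" concrete, here is a minimal sketch using a difference-of-means direction, one common way to probe for a linear feature. It is an illustration under stated assumptions, not necessarily the method used in this project: the activations are synthetic NumPy arrays rather than real model hidden states, and the names `person_direction`, `steer`, and `projection` are hypothetical helpers introduced for this example.

```python
import numpy as np


def person_direction(first_acts: np.ndarray, second_acts: np.ndarray) -> np.ndarray:
    """Unit vector from the mean second-person activation to the mean first-person one."""
    diff = first_acts.mean(axis=0) - second_acts.mean(axis=0)
    return diff / np.linalg.norm(diff)


def projection(hidden: np.ndarray, direction: np.ndarray) -> np.ndarray:
    """Scalar coordinate of each hidden state along the direction."""
    return hidden @ direction


def steer(hidden: np.ndarray, direction: np.ndarray, alpha: float) -> np.ndarray:
    """Intervene by shifting every hidden state by alpha along the direction."""
    return hidden + alpha * direction


# Synthetic stand-in for hidden states (assumption: real activations would be
# collected from a model on first-person and second-person prompts).
rng = np.random.default_rng(0)
dim = 16
true_axis = np.zeros(dim)
true_axis[0] = 1.0  # pretend grammatical person lives on coordinate 0

first_acts = rng.normal(size=(200, dim)) + 2.0 * true_axis   # "I" examples
second_acts = rng.normal(size=(200, dim)) - 2.0 * true_axis  # "you" examples

direction = person_direction(first_acts, second_acts)

# Push the "you" activations toward the "I" side of the axis.
steered = steer(second_acts, direction, alpha=4.0)

print("mean projection before:", projection(second_acts, direction).mean())
print("mean projection after: ", projection(steered, direction).mean())
```

If grammatical person is linearly encoded, a direction estimated this way should separate the two classes, and shifting along it should move activations from one side to the other. In a real model, the interesting question is whether that shift also changes the generated text.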